11 Sep Biased AI Knowledge: The Demographic Effects of Facial Recognition and Personal Voice Assistants

Previously published on IE Women Blog. By Paulina Capurro.

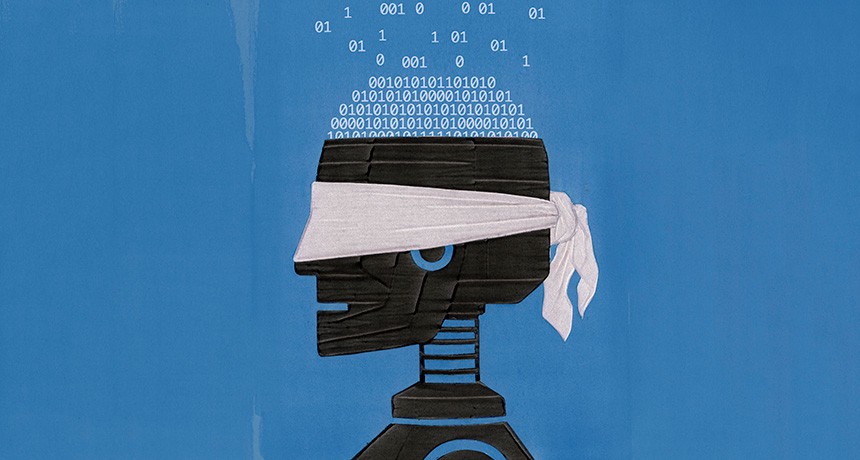

Thinking that AI systems are biased feels as unnatural to us as thinking that maths may be sexist or racist. We assume that technologies are neutral because, in the Enlightenment, quantitatively measured data was understood as an abstract extraction from the world. This abstractness was never disputed, which allowed AI systems to be presented to the world as ownerless entities that capture and reproduce an ‘objective human knowledge’ that is ‘universal’[i]. The tech companies that create AI systems maintain that when AI is biased, it’s because the data provided is unrepresentative. Thus, it’s the data, not the systems, that contains the bias. And I’ve argued in past entries that the data is unrepresentative because AI creators are uniformly male, middle-class and white[ii]. But this time I’d like to take it a step further and go deep into the philosophical trenches.

What if the language and the very knowledge inscribed in AI systems are biased? What if an ‘objective human knowledge’ that is ‘universal’ simply doesn’t exist? My premise is that the genealogy of knowledge silently informing AI discourses is reduced to a male legacy, social exclusivism and biological essentialism. In short, the very knowledge of AI systems is biased, in and of itself.

Bear with me while I make my case.

Continue reading on IE Women Blog.
